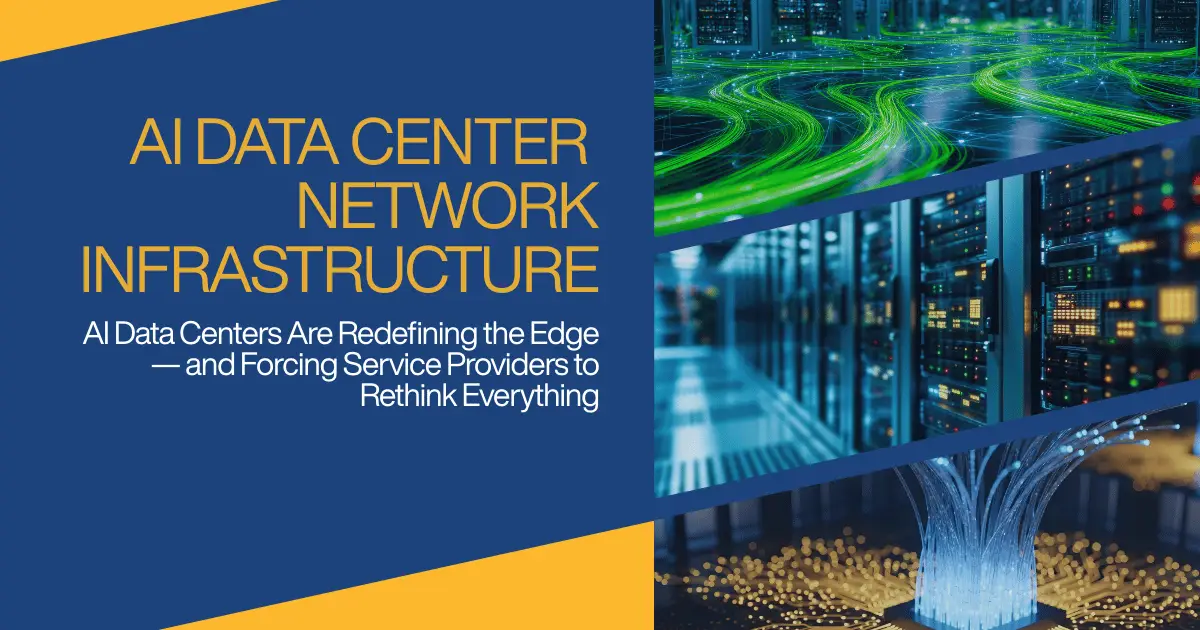

AI Data Centers Are Redefining the Edge — and Forcing Service Providers to Rethink Everything

AI data center networking is changing the rules of infrastructure design, and in the process it is separating service providers into two camps: those who become strategic AI partners and those reduced to commodity suppliers.

Texas is experiencing a major surge in AI data center development, with over 400 existing facilities and 442+ more planned or under construction as of late 2025. Key expansions include a $40 billion Google investment in new campuses in Armstrong and Haskell counties and OpenAI’s first Stargate project in Abilene. Houston, Dallas/Fort Worth, and San Antonio are all seeing rapid AI-related development, and Apple recently announced a new project in Houston.

“With Apple making the announcement of a 250,000 sqft Houston factory that will produce servers for its data centers to support the company’s artificial intelligence (AI) business, it is showing Houston is the hub for the development of data centers.” Greater Houston Partnership

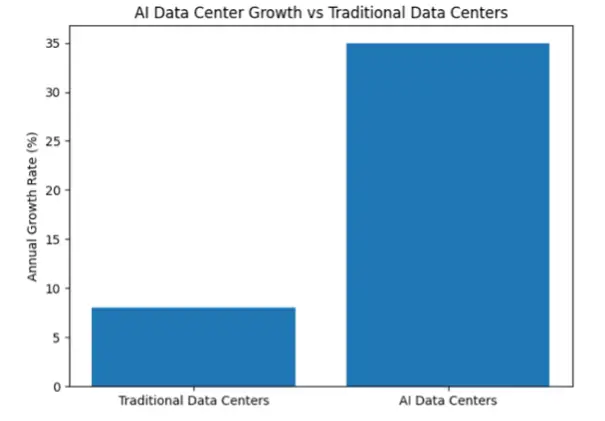

AI Data Center vs Traditional Data Center Growth

How quickly could your network restore full performance if your AI customers lost connectivity due to an outage—minutes, hours, or days?

That question is no longer theoretical.

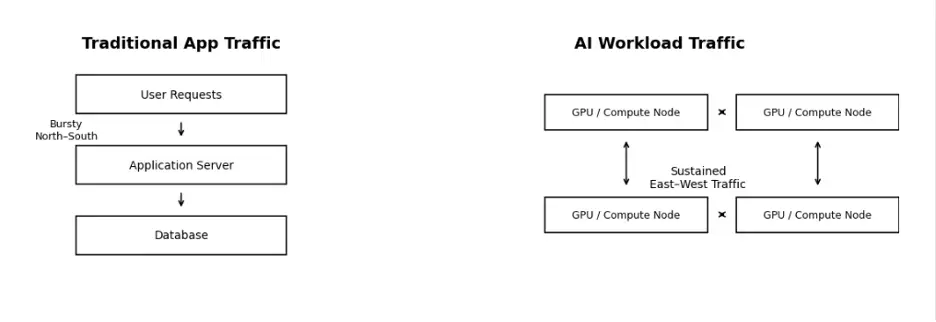

The networks that powered yesterday’s cloud workloads are now being pushed to their limits by AI training clusters, distributed inference, and relentless east-west traffic. An architectural shift is underway, and network reliability has become the primary focus.

AI is reshaping telecommunications infrastructure: 71% of IT leaders say their data centers can’t yet meet today’s AI demands, and 88% plan to expand capacity – on-prem, in the cloud, or both.

Chintan Patel, CTO and VPSE at Cisco EMEA, describes what is at stake in these decisions: “IT leaders know the network they build today will shape the business they become tomorrow. Those who act now will be the ones who lead in the AI era.”

AI is no longer experimental. It is infrastructure. A rapidly growing share of new data center capacity is being built specifically for AI workloads. But computing alone doesn’t deliver AI value. Connectivity does.

This is a pivotal moment for service providers:

- Secure networking is mission-critical.

- AI intensifies the demand for resilient networks.

- Network reliability directly impacts customer revenue. For example, one severe outage per business per year adds up to $160B globally.

AI Workloads Don’t Tolerate Network Excuses

Speed isn’t the only consideration. AI data center network infrastructure must provide extremely low latency with no packet loss and scale to connect hundreds or thousands of xPUs. Modern AI workloads require 400-800 gigabits per second (Gbps) of bandwidth per GPU, far exceeding standard enterprise network capabilities. AI traffic is:

- Constant, not bursty

- Lateral (east-west) heavy, reducing reliance on traditional 3-tier architectures

- Extremely sensitive to jitter, latency, and packet loss

A disruption that might go unnoticed in a conventional application can derail AI training jobs, idle expensive GPUs, or degrade inference accuracy.
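To put the per-GPU bandwidth figure in perspective, here is a rough back-of-the-envelope sketch in Python. It multiplies the 400-800 Gbps per-GPU range cited above by a few cluster sizes and estimates the GPU-hours lost during an outage. The cluster sizes, the 30-minute outage, and the function names are illustrative assumptions, not figures from any provider, and the idle-time estimate assumes every GPU sits idle for the full outage.

```python
def aggregate_bandwidth_tbps(num_gpus: int, gbps_per_gpu: float) -> float:
    """Total bandwidth in Tbps if every GPU drives its full per-GPU rate."""
    return num_gpus * gbps_per_gpu / 1000.0


def idle_gpu_hours(num_gpus: int, outage_minutes: float) -> float:
    """GPU-hours lost, assuming all GPUs are idle for the whole outage."""
    return num_gpus * outage_minutes / 60.0


if __name__ == "__main__":
    # Hypothetical cluster sizes, chosen only for illustration.
    for gpus in (256, 1024, 4096):
        low = aggregate_bandwidth_tbps(gpus, 400)   # low end of cited range
        high = aggregate_bandwidth_tbps(gpus, 800)  # high end of cited range
        lost = idle_gpu_hours(gpus, outage_minutes=30)  # assumed outage length
        print(f"{gpus} GPUs: {low:.1f}-{high:.1f} Tbps aggregate, "
              f"{lost:.0f} GPU-hours idle in a 30-minute outage")
```

Even under these simplified assumptions, the numbers grow linearly with cluster size, which is why restoration time matters as much as raw capacity.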

“AI doesn’t fail gracefully — it fails disastrously.” Stephen Collins, Sr Software Engineer

For service providers, this exposes a hard truth: even the best networks break under pressure. The recent Verizon outage shows how much network resiliency matters.

As Brian Newman, Strategic Advisor, states in his LinkedIn article: “When the network fails, AI does not degrade gracefully. It Stops. Navigation fails, Authentication breaks. Safety systems lose awareness. The problem is not the AI model. It is the infrastructure beneath it.”

The Competitive Divide Is Forming Now

The battle for broadband supremacy, once defined by download and upload speed, will be fought on a new, more demanding front: the enablement of AI. This pivot redefines the core product. The fight is not for the casual browser but for the power user.

Major players are becoming aware of this shift, and their strategic announcements and capital allocation plans reflect a clear alignment toward capturing the AI-enabled future.

To stay relevant, service providers should:

- Develop edge architecture

- Plan fiber and wavelength together

- Focus on restoration speed from day one

In the AI era, connectivity isn’t a utility — it’s a differentiator.

So, here’s the question every service provider should be asking:

When your customer’s AI workload is under pressure, does your network simply function, or does it help them outperform their competition?

Because in this market, the answer defines who leads…

and who gets left behind.

Sources used:

- Google: https://datacenters.google/locations/texas/

- OpenAI: https://www.cnbc.com/2025/09/23/openai-first-data-center-in-500-billion-stargate-project-up-in-texas.html

- Greater Houston Partnership: https://houston.org/events/houstons-ai-driven-data-center-boom-investment-innovation-and-policy/

Quotes:

- Chintan Patel, CTO and VPSE at Cisco EMEA: https://newsroom.cisco.com/c/r/newsroom/en/us/executives/jeetu-patel.html

- Stephen Collins, Sr Software Engineer (LinkedIn): https://www.linkedin.com/in/stephen-collins-2b8207aa/

- Brian Newman, LinkedIn article: https://www.linkedin.com/posts/briancnewman_ai-edgecomputing-telecom-activity-7417884752822714368-Jl_E/